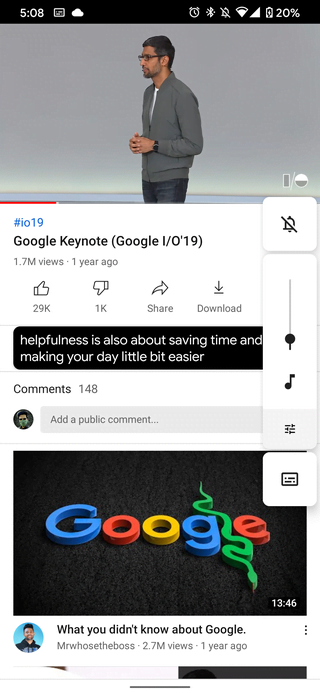

The full automatic speech recognition (ASR) RNN-T engine runs only during speech periods in order to minimize memory and battery usage. For example, when music is detected and speech is not present in the audio stream, the corresponding label will appear on screen, and the ASR model will be unloaded. The ASR model is only loaded back into memory when speech is present in the audio stream again.

In order for Live Caption to be most useful, it should be able to run continuously for long periods of time. To do this, Live Caption’s ASR model is optimized for edge devices using several techniques, such as neural connection pruning, which reduced the power consumption to 50% of that of the full-sized speech model. Yet while the model is significantly more energy efficient, it still performs well for a variety of use cases, including captioning videos and recognizing short queries and narrowband telephony speech, while also being robust to background noise.

The text-based punctuation model was optimized for running continuously on-device using a smaller architecture than the cloud equivalent, and then quantized and serialized using the TensorFlow Lite runtime. As the caption is formed, speech recognition results are rapidly updated a few times per second. In order to save on computational resources and provide a smooth user experience, the punctuation prediction is performed on the tail of the text from the most recently recognized sentence, and if the next updated ASR results do not change that text, the previously punctuated results are retained and reused.

Live Caption is now available in English on Pixel 4 and will soon be available on Pixel 3 and other Android devices. We look forward to bringing this feature to more users by expanding its support to other languages and by further improving the formatting in order to improve the perceived accuracy and coherency of the captions, particularly for multi-speaker content.
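The reuse of punctuated results described above can be illustrated with a small sketch. This is not Live Caption's actual implementation: the class `TailPunctuator`, its `update` method, and the `toy_punctuate` rule are hypothetical names introduced here, and the real punctuation model is replaced by a trivial stand-in. The sketch only shows the caching idea: the model runs on the tail of the text, and it is skipped when a new ASR update leaves that tail unchanged.

```python
from typing import Callable, Optional


class TailPunctuator:
    """Punctuate only the trailing sentence and reuse the result when it is unchanged."""

    def __init__(self, punctuate: Callable[[str], str]) -> None:
        # `punctuate` is a hypothetical stand-in for the on-device punctuation model.
        self._punctuate = punctuate
        self._cached_tail: Optional[str] = None
        self._cached_result = ""
        self.model_calls = 0  # counts how often the (expensive) model actually ran

    def update(self, finalized: str, tail: str) -> str:
        """Return caption text for the already-punctuated prefix plus the current tail."""
        if tail != self._cached_tail:
            # The ASR update changed the tail, so the model must run again.
            self._cached_result = self._punctuate(tail)
            self._cached_tail = tail
            self.model_calls += 1
        # Otherwise the previously punctuated tail is retained and reused.
        return (finalized + " " + self._cached_result).strip()


def toy_punctuate(text: str) -> str:
    """Toy rule in place of a real model: capitalize the text and append a period."""
    return text[:1].upper() + text[1:] + "." if text else ""
```

With several ASR updates arriving per second, many of them leave the tail untouched, so in this sketch `model_calls` grows much more slowly than the number of calls to `update`.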
Live Caption integrates the signal from the three models to create a single caption track, where sound event tags appear without interrupting the flow of speech recognition results. Punctuation symbols are predicted while text is updated in parallel.

Incoming sound is processed through a Sound Recognition and ASR feedback loop. The produced text or sound label is formatted and added to the caption.

For sound recognition, we leverage previous work that was done for sound event detection, using a model that was built on top of the AudioSet dataset. The Sound Recognition model is used not only to generate popular sound effect labels but also to detect speech periods.
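The feedback loop between sound recognition and ASR can be sketched as follows. Everything here is illustrative: `GatedCaptioner`, `load_asr`, and the string labels are hypothetical, the bracketed tag format is an assumption, and the classifier output is passed in directly rather than computed from audio. The point is only the gating: the recognizer is loaded when speech is detected and released when it is not.

```python
from typing import Callable, Optional

# Hypothetical type for a loaded recognizer: audio chunk in, text out.
Recognizer = Callable[[bytes], str]


class GatedCaptioner:
    """Run ASR only while the sound classifier reports speech."""

    def __init__(self, load_asr: Callable[[], Recognizer]) -> None:
        self._load_asr = load_asr        # assumed factory that loads the ASR model
        self._asr: Optional[Recognizer] = None
        self.load_count = 0              # how many times the model was (re)loaded

    def process(self, chunk: bytes, label: str) -> str:
        """Return caption text for one audio chunk.

        `label` stands in for the sound recognition output for this chunk,
        for example "speech" or "music".
        """
        if label == "speech":
            if self._asr is None:
                # Speech is present again: load the recognizer back into memory.
                self._asr = self._load_asr()
                self.load_count += 1
            return self._asr(chunk)
        # No speech: drop the recognizer so its memory can be reclaimed,
        # and emit a sound event tag instead (bracket format is an assumption).
        self._asr = None
        return f"[{label.upper()}]"
```

In a real system the load and unload steps would likely be debounced so that brief pauses in speech do not cause repeated reloads; this sketch omits that for brevity.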

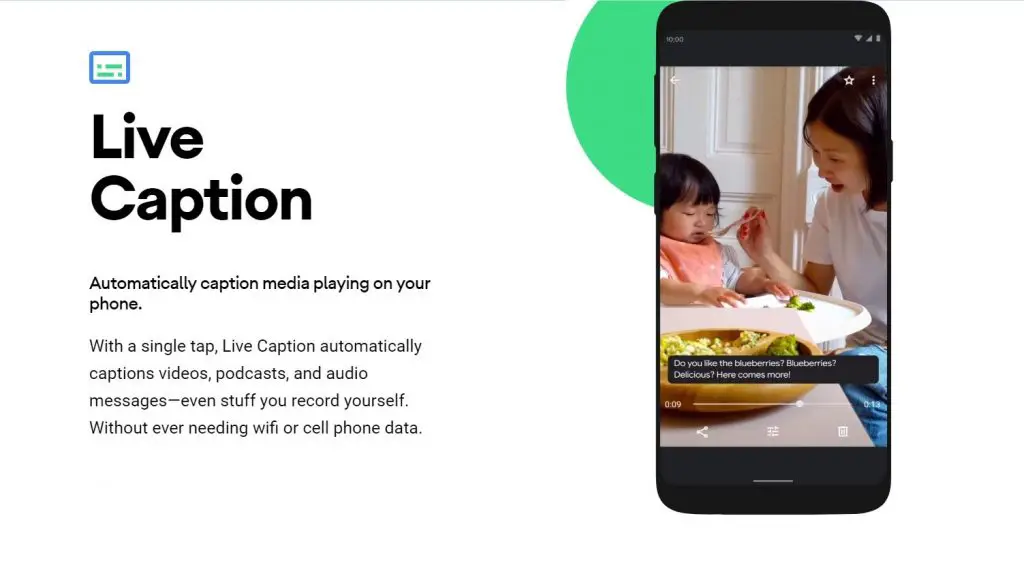

The feature is currently available on Pixel 4 and Pixel 4 XL, will roll out to Pixel 3 models later this year, and will be more widely available on other Android devices soon. When media is playing, Live Caption can be launched with a single tap from the volume control to display a caption box on the screen.

Building Live Caption for Accuracy and Efficiency

Live Caption works through a combination of three on-device deep learning models: a recurrent neural network (RNN) sequence transduction model for speech recognition (RNN-T), a text-based recurrent neural network model for unspoken punctuation, and a convolutional neural network (CNN) model for sound event classification.

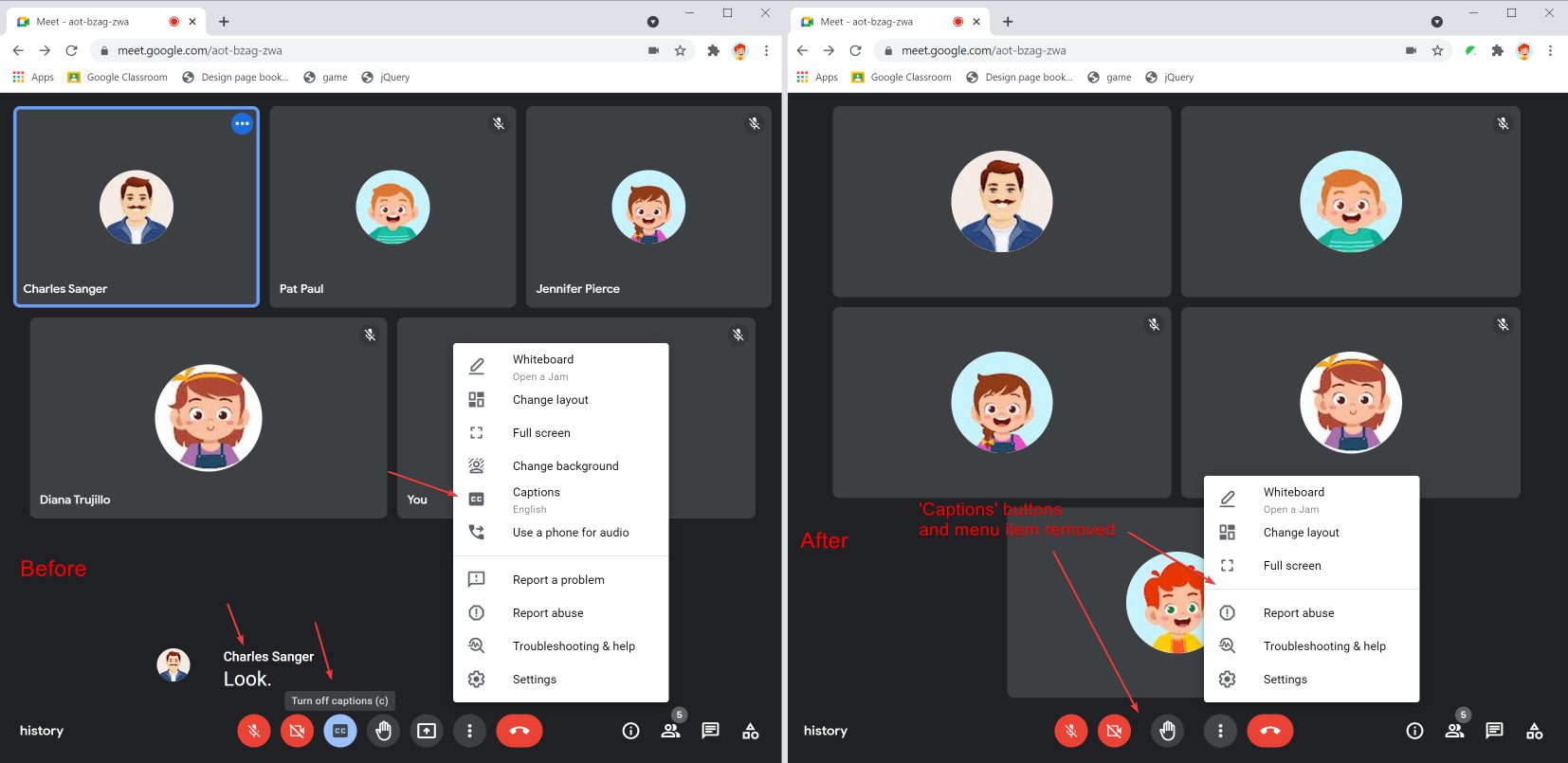

In an effort to solve that problem, Google has started offering a new Live Caption feature for Chrome users, designed to work on every platform and not just the company’s own. Google Chrome itself creates captions for any video and audio content that is playing in the browser. Currently, the feature is available in only one language: English. In this step-by-step guide, we will show how you can enable this feature.

Step 1: Open the Google Chrome browser on your computer, be it Windows, Mac, or even Linux.

Step 2: Now, click the three-dot menu icon in the top-right of the window.

Step 3: From the options available, select “Settings.”

Step 4: In the left sidebar, click on “Advanced” and then select “Accessibility.”

Step 5: Now, for the “Live Caption” option, toggle the switch ON.

After you toggle the feature on, some speech recognition files will be downloaded to your computer. If you do not see the Live Caption option, you will need to update your browser, because the feature is new and not available in older versions. Once the required files are downloaded, the Live Caption feature is ready to use. You can go to any website and play a video, and the caption will appear in a translucent black box at the bottom of the screen.

Recently we introduced Live Caption, a new Android feature that automatically captions media playing on your phone. The captioning happens in real time, completely on-device, without using network resources, thus preserving privacy and lowering latency.
